Persistent Workers

Introduction

Cirrus CI pioneered the idea of using compute services directly instead of requiring users to manage their own infrastructure: configuring servers for running CI jobs, performing upgrades, and so on. Cirrus CI simply uses cloud providers' APIs to create virtual machines or containers on demand. This fundamental design difference has multiple benefits compared to more traditional CIs:

- Ephemeral environment. Each Cirrus CI task starts in a fresh VM or a container without any state left by previous tasks.

- Infrastructure as code. All VM versions and container tags are specified in the `.cirrus.yml` configuration file in your Git repository. For any past revision, Cirrus tasks can be identically reproduced at any point in the future using the exact VM versions or container tags specified in `.cirrus.yml` at that revision. Just imagine how difficult it is to do a security release for a 6-month-old version if your CI environment changes independently.

- Predictability and cost efficiency. Cirrus CI uses the elasticity of modern clouds: it creates VMs and containers on demand, only when they are needed for executing Cirrus tasks, and deletes them right after. Immediately scale from 0 to hundreds or thousands of parallel Cirrus tasks without needing to over-provision infrastructure or constantly monitor whether your team has reached the maximum parallelism of your current CI plan.

What is a Persistent Worker

For some use cases a traditional CI setup is still useful, since not everything is available in the cloud: for example, testing the hardware itself or third-party devices that are attached by wire. For such use cases it makes sense to go with a traditional CI setup: install a binary on the hardware that constantly polls for new tasks and executes them one after another.

This is precisely what Persistent Workers for Cirrus CI are: a simple way to run Cirrus tasks beyond the cloud!

Configuration

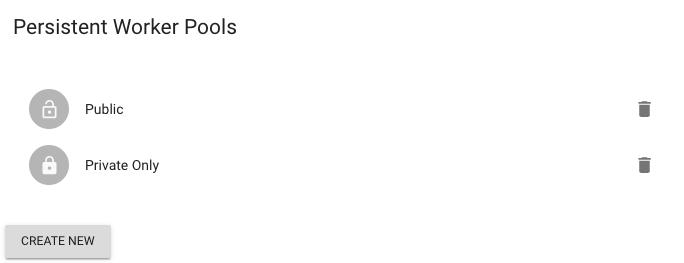

First, create a persistent workers pool for your personal account or a GitHub organization (https://cirrus-ci.com/settings/github/<ORGANIZATION>):

Once a persistent worker pool is created, copy the pool's registration token and follow the Cirrus CLI guide to configure a host that will act as a persistent worker.

Once configured, target task execution on a worker by using the `persistent_worker` instance and matching by the worker's labels:
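A minimal sketch of such a task, assuming a worker that was registered with the hypothetical labels `os: darwin` and `arch: arm64` (the label names, values, and script are placeholders chosen for illustration):

```yaml
task:
  persistent_worker:
    # Hypothetical labels; they must match the labels the worker was started with.
    labels:
      os: darwin
      arch: arm64
  # Placeholder script.
  script: echo "running on a persistent worker"
```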

Or remove the `labels` field if you want to target any worker:
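For illustration, a task that does not filter by labels could look roughly like this. It assumes that an empty `persistent_worker` mapping is accepted, and the script is a placeholder:

```yaml
task:
  # No labels field: any worker in the pool may pick up this task.
  persistent_worker: {}
  # Placeholder script.
  script: echo "running on any persistent worker"
```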

Resource management

By default, Cirrus CI limits task concurrency to one task per worker. To schedule more tasks on a given worker, configure its resources.

Once done, the worker will be considered resource-aware and will be able to execute concurrently either:

- one resource-less task (a task without a `resources:` field)

- multiple resourceful tasks (tasks with a `resources:` field), as long as the worker has resources available for these tasks

Note that label matching still takes place for both resource-less and resourceful tasks.

So, considering a worker with the following configuration:
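As a sketch only, a worker configuration file for this scenario might look like the following. The top-level `token`, `name`, and `resources` keys are assumed here, and the worker name and resource counts are hypothetical values chosen so that the worker offers two connected iPhones and two connected iPads:

```yaml
# Registration token copied from the pool settings (placeholder value).
token: "<REGISTRATION_TOKEN>"

# Hypothetical worker name.
name: "device-lab-1"

# Hypothetical resource counts advertised by this worker.
resources:
  connected-iphones: 2
  connected-ipads: 2
```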

It will be able to concurrently execute one instance of this task:

```yaml
task:
  name: Test iPhones and iPads
  persistent_worker:
    resources:
      connected-iphones: 2
      connected-ipads: 2
  script: make test
```

And two of these:
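A hedged sketch of such a task, assuming a worker that advertises 2 `connected-iphones` and 2 `connected-ipads`: each instance requests half of what the worker offers, so two instances fit at the same time. The task name and the requested amounts are illustrative assumptions.

```yaml
task:
  # Hypothetical task name.
  name: Test one iPhone and one iPad
  persistent_worker:
    resources:
      # Assumed amounts: half of the worker's assumed capacity of 2 each.
      connected-iphones: 1
      connected-ipads: 1
  script: make test
```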

Isolation

By default, a persistent worker spawns all tasks directly on the host machine it runs on.

However, using the isolation field, a persistent worker can utilize a VM or a container engine to increase the separation between tasks and to unlock the ability to use different operating systems.

Tart

To use this isolation type, install Tart on the persistent worker's host machine.

Here's an example of a configuration that will run the task inside of a fresh macOS virtual machine created from a remote ghcr.io/cirruslabs/macos-ventura-base:latest VM image:

```yaml
persistent_worker:
  isolation:
    tart:
      image: ghcr.io/cirruslabs/macos-ventura-base:latest
      user: admin
      password: admin

task:
  script: system_profiler
```

Once the VM spins up, the persistent worker connects to the VM's IP address over SSH using the `user` and `password` credentials and runs the latest agent version.

Container

To use this isolation type, install and configure a container engine like Docker or Podman (essentially the ones supported by the Cirrus CLI).

Here's an example that runs a task in a separate container, with a couple of directories from the host machine accessible inside it:
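A minimal sketch of what such a configuration might look like, mirroring the structure of the Tart example. The `container`, `image`, and `volumes` field names, the Docker-style `host:container[:ro]` volume syntax, the image, and all paths are assumptions made for illustration:

```yaml
persistent_worker:
  isolation:
    container:
      # Hypothetical image.
      image: debian:latest
      # Hypothetical host directories exposed inside the container.
      volumes:
        - /path/on/host:/path/in/container
        # The trailing ":ro" is assumed to mount the directory read-only.
        - /tmp/persistent-cache:/tmp/cache:ro

task:
  script: uname -a
```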